Use Cases & Plugin Ecosystem

ChronoLog is designed as a platform -- its plugin architecture enables diverse applications to share a common, time-ordered log infrastructure. Here are the current and emerging integration points.

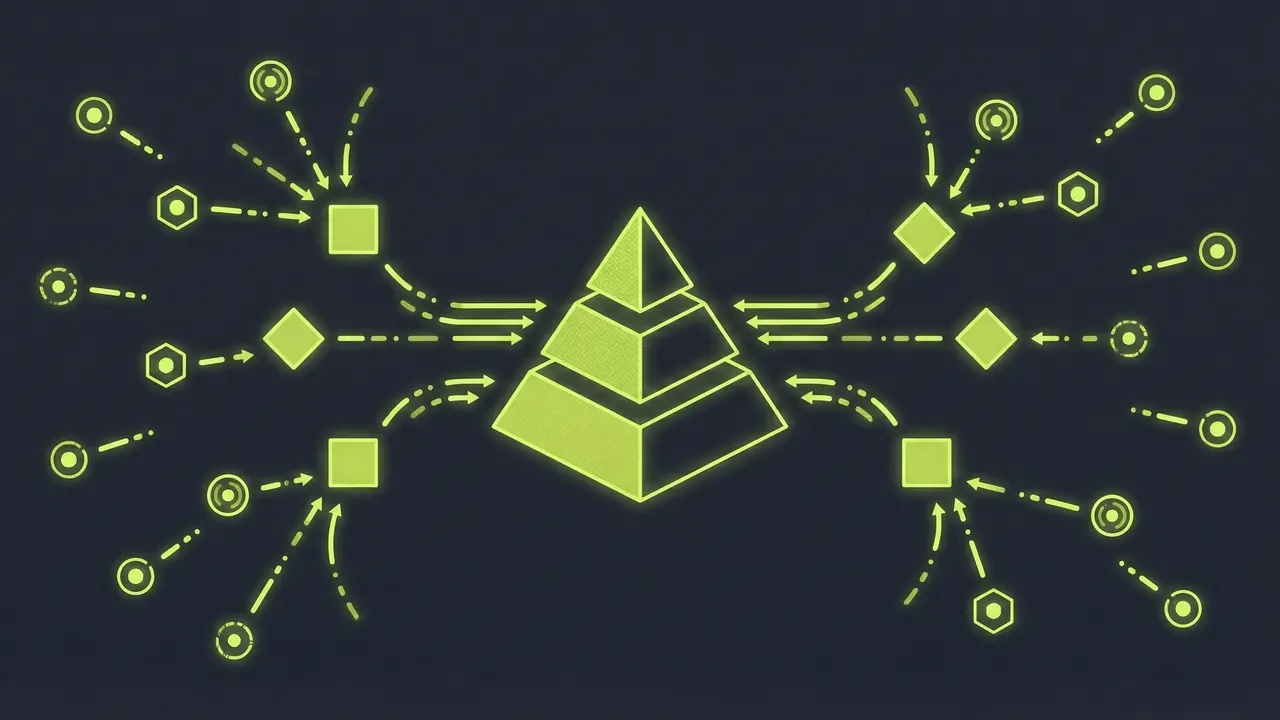

Plugin & Connector Layer

SQL Query Plugin

Query and analyze log data with familiar SQL semantics. Physical time ordering enables efficient temporal range scans without the need for auxiliary indices or pre-built materialized views.

How It Works

The SQL plugin translates SELECT/WHERE/GROUP BY statements into ChronoPlayer replay operations. Because the underlying data is already sorted by time, range queries like "all events between T1 and T2" require no B-tree lookups -- they map directly to sequential reads across the relevant time window.
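The range-scan idea above can be illustrated with a minimal Python sketch. It is not the SQL plugin's implementation or API: the `events` list, `range_scan`, and `count_by_type` are hypothetical names, and the binary search merely stands in for however the system locates the start of a time window. The point is that once the data is sorted by time, the result of a `WHERE ts BETWEEN T1 AND T2` filter is one contiguous slice.

```python
from bisect import bisect_left, bisect_right
from collections import Counter

# Hypothetical in-memory stand-in for a time-ordered story:
# (timestamp, event_type) tuples, already sorted by timestamp.
events = [
    (100, "open"),
    (105, "write"),
    (110, "write"),
    (120, "close"),
    (130, "open"),
    (135, "write"),
]


def range_scan(log, t1, t2):
    """Return every event with t1 <= timestamp <= t2 as one contiguous slice."""
    # Assumption: a real store would not materialize this list; it is only
    # here so the standard-library bisect functions can find the boundaries.
    timestamps = [ts for ts, _ in log]
    lo = bisect_left(timestamps, t1)
    hi = bisect_right(timestamps, t2)
    return log[lo:hi]  # sequential read over the window


def count_by_type(log, t1, t2):
    """Roughly: SELECT event_type, COUNT(*) ... WHERE ts BETWEEN t1 AND t2 GROUP BY event_type."""
    return Counter(kind for _, kind in range_scan(log, t1, t2))


window = range_scan(events, 105, 130)
counts = count_by_type(events, 105, 130)
```

Here `window` holds the four events from timestamp 105 through 130, and `counts` groups them by type without touching anything outside the window.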

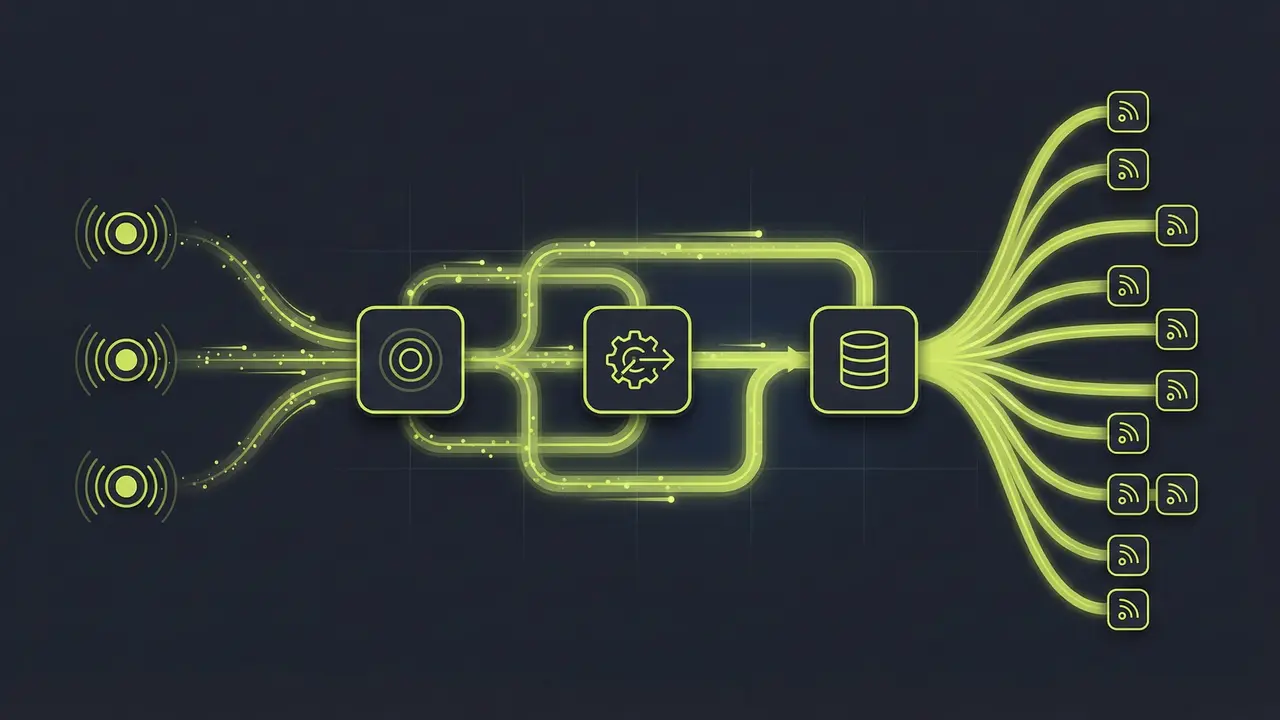

Pub/Sub & Streaming

Publish-subscribe and real-time event streaming built on ChronoGrapher's internal DAG pipeline. IOWarp uses this layer for internal logging and for building higher-level stream abstractions.

How It Works

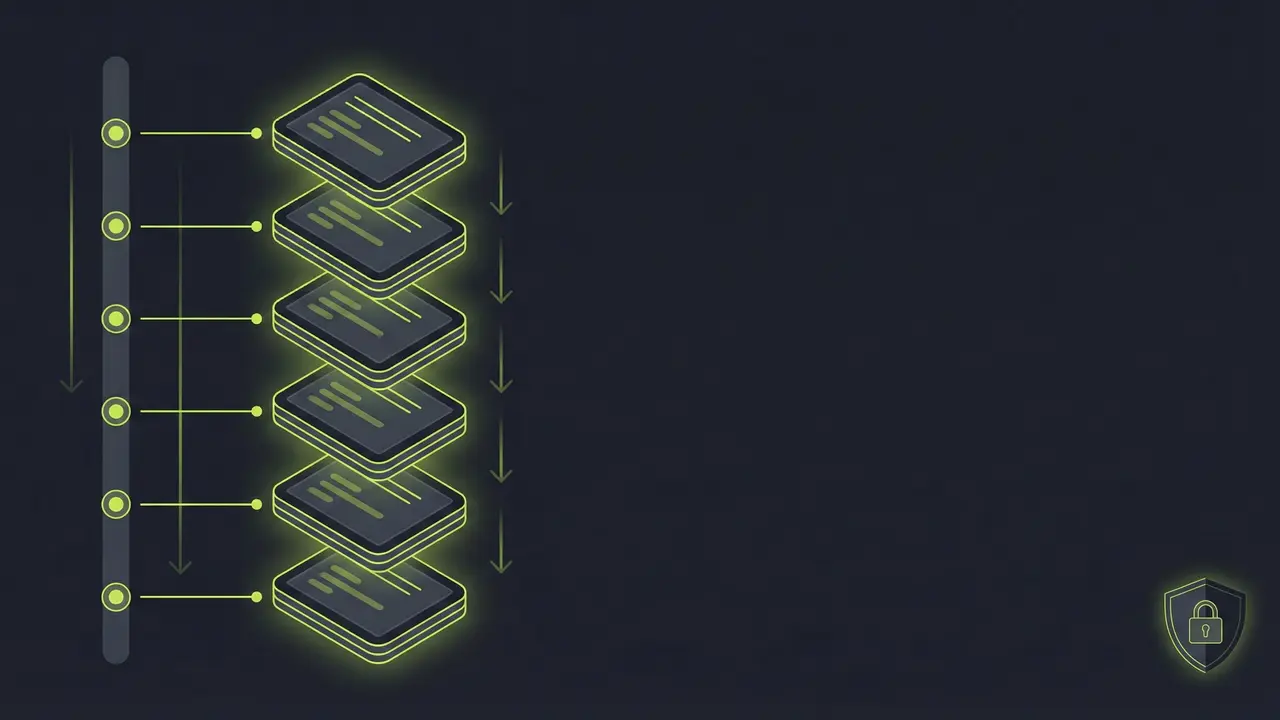

Events flow through a three-stage DAG: event collection from ChronoKeeper, story building (assembling related events into coherent stories), and story writing (persisting to lower tiers). Custom operators can be attached at each stage for filtering, aggregation, or transformation -- enabling real-time analytics on top of the shared log without external stream processing frameworks.
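A rough way to picture the three stages and an attached operator is a chain of Python generators. This is only an illustrative sketch under stated assumptions: the function names, the event dictionary fields (`story`, `ts`, `level`), and the dictionary used as a sink are all hypothetical and do not reflect ChronoGrapher's actual interfaces.

```python
from collections import defaultdict


def collect(raw_events):
    """Stage 1 (event collection): yield events in arrival order."""
    for event in raw_events:
        yield event


def drop_debug(events):
    """Hypothetical custom operator: filter out debug-level events."""
    return (e for e in events if e["level"] != "debug")


def build_stories(events):
    """Stage 2 (story building): group events by story, sorted by timestamp."""
    stories = defaultdict(list)
    for event in events:
        stories[event["story"]].append(event)
    for name, items in stories.items():
        yield name, sorted(items, key=lambda e: e["ts"])


def write_stories(stories, sink):
    """Stage 3 (story writing): persist each story to a sink (a dict here)."""
    for name, items in stories:
        sink[name] = items
    return sink


raw = [
    {"story": "job-a", "ts": 3, "level": "info", "msg": "step 2"},
    {"story": "job-b", "ts": 1, "level": "debug", "msg": "heartbeat"},
    {"story": "job-a", "ts": 2, "level": "info", "msg": "step 1"},
    {"story": "job-b", "ts": 4, "level": "warn", "msg": "slow disk"},
]

# The filter operator is attached between collection and story building.
sink = write_stories(build_stories(drop_debug(collect(raw))), {})
```

Because each stage consumes an iterator and produces one, an operator such as `drop_debug` can be slotted in between any two stages without changing the others.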

Key-Value Store (ChronoKVS)

Time-series key-value semantics on top of the ordered log. Each key maps to a chronicle, and updates are appended as timestamped entries with built-in ordering guarantees.

How It Works

Because the KV store is built on ChronoLog's totally ordered log, write ordering comes for free -- no Paxos or Raft consensus overhead. The log-structured approach means writes are always sequential appends, while ChronoLog's time-based ordering provides linearizability across distributed replicas.
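The key-to-chronicle mapping can be sketched in a few lines of Python. This is a single-process toy, not ChronoKVS itself: the `LogKV` class, its method names, and the integer logical clock are assumptions made for illustration, and nothing here models distribution or replication.

```python
from bisect import bisect_right


class LogKV:
    """Toy time-series KV store: each key owns an append-only list of entries."""

    def __init__(self):
        self._chronicles = {}  # key -> list of (timestamp, value)
        self._clock = 0        # hypothetical logical clock standing in for log timestamps

    def put(self, key, value):
        """Append a timestamped entry; existing entries are never overwritten."""
        self._clock += 1
        self._chronicles.setdefault(key, []).append((self._clock, value))
        return self._clock

    def get(self, key, at=None):
        """Return the latest value, or the value as of timestamp `at`."""
        entries = self._chronicles.get(key, [])
        if at is None:
            return entries[-1][1] if entries else None
        timestamps = [ts for ts, _ in entries]
        idx = bisect_right(timestamps, at)
        return entries[idx - 1][1] if idx else None

    def history(self, key):
        """Return every (timestamp, value) entry for the key, oldest first."""
        return list(self._chronicles.get(key, []))


kv = LogKV()
t1 = kv.put("temperature", 20.5)
t2 = kv.put("temperature", 21.0)
t3 = kv.put("pressure", 101.3)
```

Reads at a past timestamp fall out of the design: `kv.get("temperature", at=t1)` returns the first value even after later updates, because updates append rather than replace.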

AI Agent Memory & MCP Server

ChronoLog as the persistent memory backend for autonomous AI agents. The official MCP (Model Context Protocol) server lets any MCP-compatible agent -- Claude, GPT, custom LLM systems -- create, query, and replay time-ordered logs natively.

How It Works

AI agents need durable, time-ordered memory that persists across sessions and scales across agents. ChronoLog's chronicle abstraction maps naturally to agent memory: each agent (or conversation) gets a chronicle, every action or observation is a timestamped entry, and replay enables full context reconstruction. The MCP server exposes chronicle.create, chronicle.record, chronicle.replay, and chronicle.query as tools. These enable use cases such as conversation audit trails, cross-agent shared memory, real-time system monitoring by AI operators, and long-horizon planning with historical context. The server is part of the IOWarp Agent Toolkit.
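To make the chronicle-as-memory mapping concrete, here is a minimal in-memory sketch keyed by the four tool names listed above. Only the tool names come from the text; the argument names (`name`, `payload`, `start`, `end`, `text`), the return values, and the `call_tool` dispatcher are assumptions for illustration and should not be read as the MCP server's actual schema.

```python
class ChronicleMemory:
    """Toy agent memory: one append-only list of timestamped entries per chronicle."""

    def __init__(self):
        self._chronicles = {}
        self._next_ts = 0  # hypothetical logical clock, not a real ChronoLog timestamp

    def create(self, name):
        self._chronicles.setdefault(name, [])
        return name

    def record(self, name, payload):
        self._next_ts += 1
        self._chronicles[name].append({"ts": self._next_ts, "payload": payload})
        return self._next_ts

    def replay(self, name, start=0, end=None):
        """Return entries in time order, optionally bounded by [start, end]."""
        return [
            e for e in self._chronicles[name]
            if e["ts"] >= start and (end is None or e["ts"] <= end)
        ]

    def query(self, name, text):
        """Return entries whose payload contains the given substring."""
        return [e for e in self._chronicles[name] if text in e["payload"]]


memory = ChronicleMemory()

# Dispatch table keyed by the tool names mentioned in the text.
TOOLS = {
    "chronicle.create": memory.create,
    "chronicle.record": memory.record,
    "chronicle.replay": memory.replay,
    "chronicle.query": memory.query,
}


def call_tool(tool, **arguments):
    """Hypothetical dispatcher standing in for an MCP tool invocation."""
    return TOOLS[tool](**arguments)


call_tool("chronicle.create", name="agent-1")
call_tool("chronicle.record", name="agent-1", payload="user asked for a status report")
call_tool("chronicle.record", name="agent-1", payload="agent fetched cluster metrics")
context = call_tool("chronicle.replay", name="agent-1")
matches = call_tool("chronicle.query", name="agent-1", text="metrics")
```

Replaying the chronicle returns the entries in the order they were recorded, which is what lets an agent rebuild its context at the start of a new session.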

ChronoLog MCP Server on GitHub

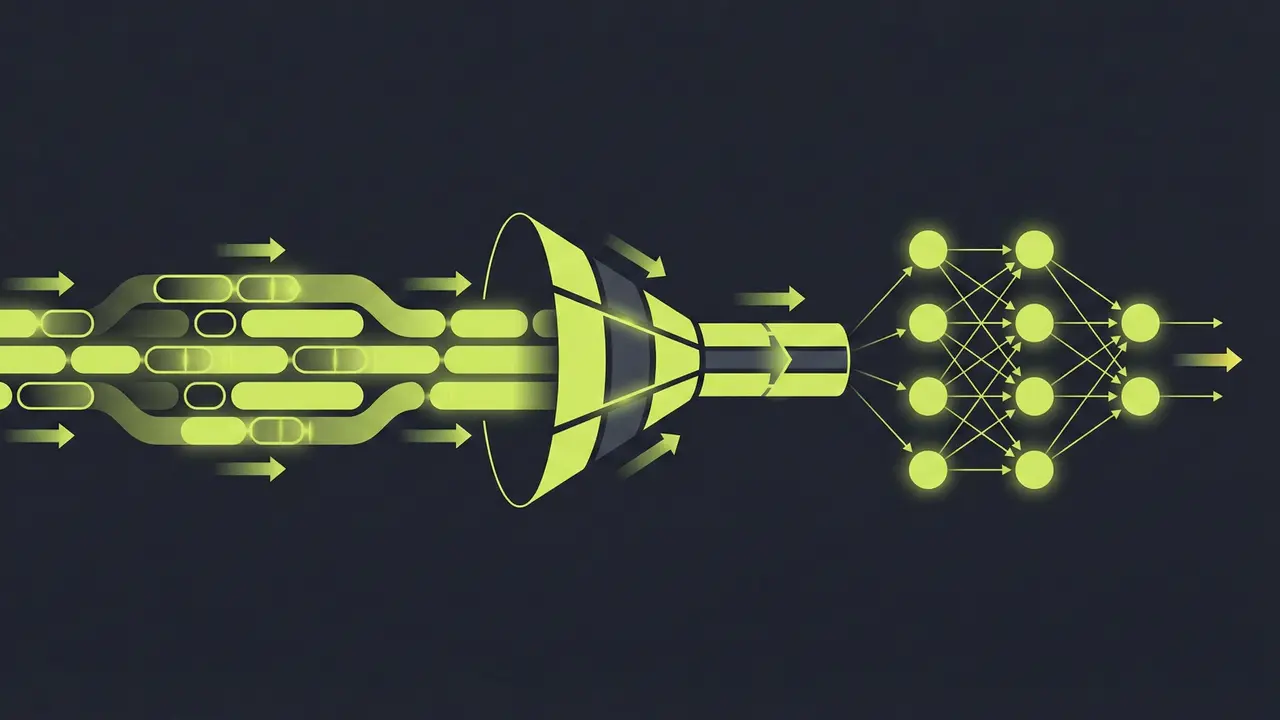

ML & Training Pipelines

TensorFlow integration module for feeding time-ordered data streams directly into training and inference workflows, eliminating separate preprocessing stages.

How It Works

The module provides a native tf.data.Dataset source backed by ChronoLog stories. ChronoKeeper handles real-time feature logging from distributed training jobs, while ChronoPlayer enables multiple training workers to read historical data concurrently through parallel replay.
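Parallel replay can be pictured as several workers each reading a disjoint time window of the same story. The sketch below uses only the Python standard library; the `story` list, `replay`, `split_windows`, and `parallel_replay` are hypothetical names, not the integration module's API. A generator like `replay` is the kind of source that could in principle be wrapped with TensorFlow's `tf.data.Dataset.from_generator`, though how the module actually builds its dataset is not specified here.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical story: (timestamp, record) pairs sorted by timestamp 0, 10, ..., 90.
story = [(ts, {"feature": ts * 0.5}) for ts in range(0, 100, 10)]


def replay(log, start, end):
    """Yield records with start <= timestamp < end, in time order."""
    for ts, record in log:
        if start <= ts < end:
            yield ts, record


def split_windows(start, end, workers):
    """Split [start, end) into contiguous, non-overlapping time windows."""
    step = (end - start + workers - 1) // workers  # ceiling division
    return [(lo, min(lo + step, end)) for lo in range(start, end, step)]


def parallel_replay(log, start, end, workers):
    """Have each worker replay its own window; return one shard per window."""
    windows = split_windows(start, end, workers)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map preserves window order, so shards stay time-ordered overall.
        return list(pool.map(lambda w: list(replay(log, *w)), windows))


shards = parallel_replay(story, 0, 100, workers=4)
```

Because the windows do not overlap and together cover the full range, concatenating the shards reproduces the original story, so each training worker sees a distinct slice of history.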

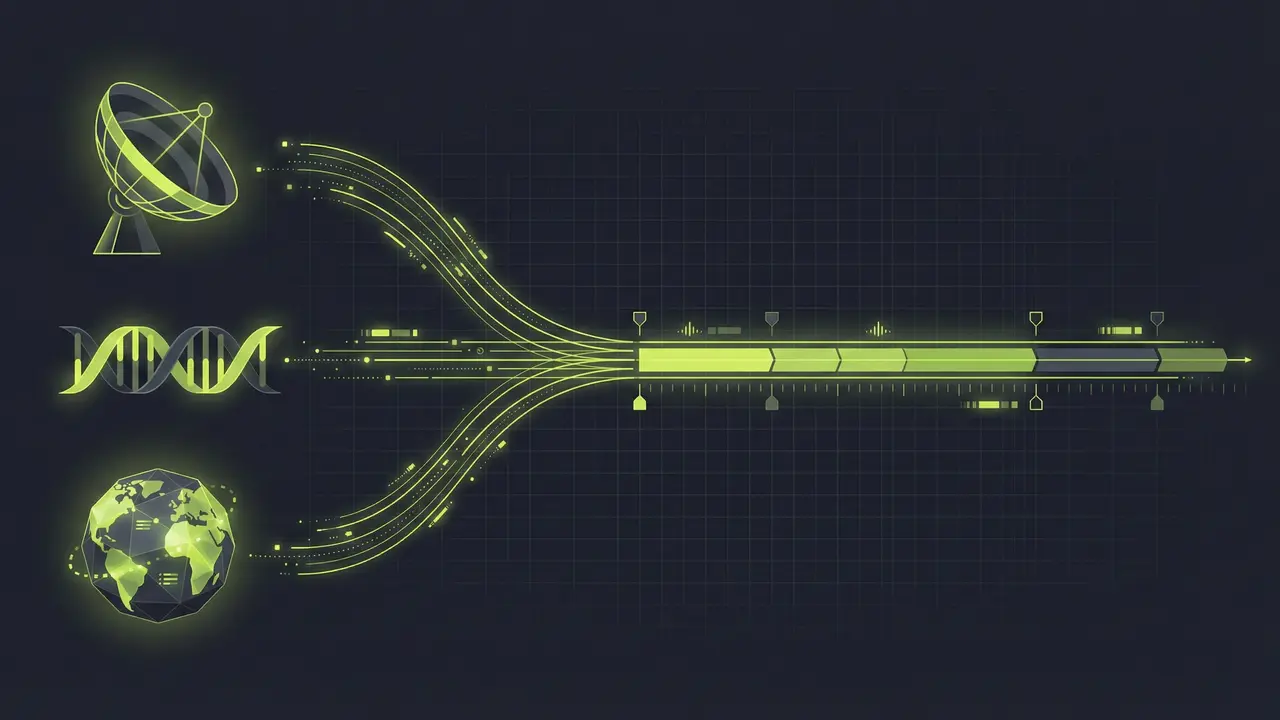

Target Domains

Scientific Computing

Astrophysics, genomics, climate modeling, materials science -- capturing massive telemetry and simulation data across distributed instruments.

AI & Agentic Workflows

LLM context logging, model provenance, and AI agent memory via the official ChronoLog MCP server and the IOWarp Agent Toolkit.

HPC System Monitoring

Distributed telemetry collection across compute clusters, validated on IIT's Ares cluster with SLURM-based deployments.

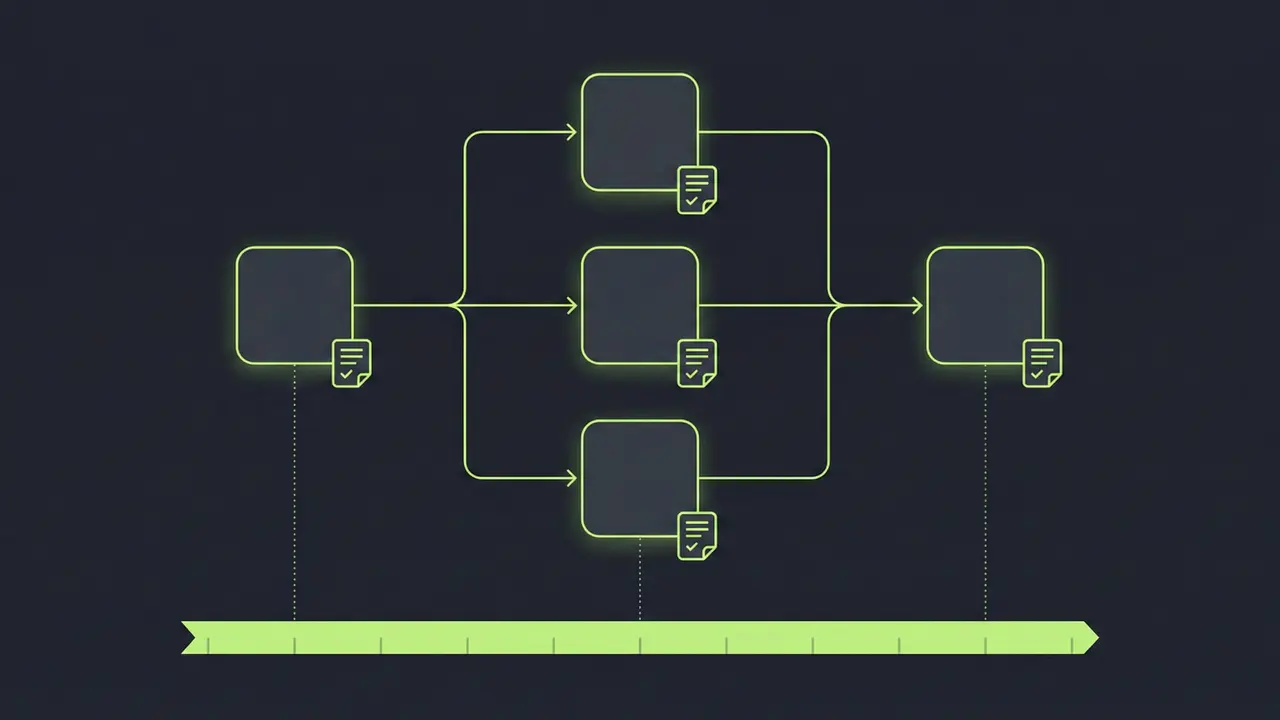

Workflow Orchestration

Integration with Parsl, funcX, and Flux for tracking task execution, data provenance, and workflow-level I/O optimization.

IoT & Edge

Distributed event streams from sensor networks requiring time-synchronized collection and tiered long-term storage.

Financial & Compliance

Activity logging requiring total ordering with immediate visibility for audit trails and regulatory compliance.